Emulating the way the human visual cortex processes and visualises information, the system is ‘trained’ to identify a range of different visual signatures, classifying them based on common characteristics. This is achieved by incorporating machine learning techniques to improve detection and visualisation.

The training process involves presenting the machine with hundreds of images of a subject to analyse at a variety of angles, distances, and with different obstructions. Over time, this allows it to build up an understanding of how particular people, vehicles, or items should appear.
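As a rough illustration of why varied training views matter, the toy sketch below trains a nearest-centroid classifier on tiny 4x4 pixel grids. It is not the UWS and Thales method, which the article does not describe in detail. The grids, the `occlude` and `flip` helpers and the choice of classifier are all assumptions made for the example.

```python
# Toy sketch only: a nearest-centroid classifier stands in for the real
# (undisclosed) machine learning model. All names and data are hypothetical.

def occlude(image, row):
    """Copy the image with one row blanked out, mimicking an obstruction."""
    return [[0] * len(r) if i == row else list(r) for i, r in enumerate(image)]

def flip(image):
    """Mirror the image left to right, mimicking a different viewing angle."""
    return [list(reversed(r)) for r in image]

def augment(image):
    """Generate the original view, its mirror, and every single-row occlusion of both."""
    views = [image, flip(image)]
    return views + [occlude(v, row) for v in views for row in range(len(image))]

def flatten(image):
    """Turn a 2D grid into a flat list of pixel values."""
    return [pixel for r in image for pixel in r]

def train(examples):
    """Average the pixel vectors of each label to get one centroid per class."""
    sums, counts = {}, {}
    for label, image in examples:
        vec = flatten(image)
        total = sums.get(label, [0.0] * len(vec))
        sums[label] = [s + v for s, v in zip(total, vec)]
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def classify(centroids, image):
    """Return the label whose centroid is closest (squared distance) to the image."""
    vec = flatten(image)
    def distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(vec, centroid))
    return min(centroids, key=lambda label: distance(centroids[label]))

# Hypothetical signatures: a tall, narrow shape and a wide, low shape.
PERSON = [[0, 1, 0, 0], [0, 1, 0, 0], [0, 1, 0, 0], [0, 1, 0, 0]]
VEHICLE = [[0, 0, 0, 0], [0, 0, 0, 0], [1, 1, 1, 1], [1, 1, 1, 1]]

training_set = [("person", v) for v in augment(PERSON)]
training_set += [("vehicle", v) for v in augment(VEHICLE)]
centroids = train(training_set)

# Views that never appear in the training set: each is heavily obstructed.
half_hidden_person = [[0, 0, 0, 0], [0, 0, 1, 0], [0, 0, 1, 0], [0, 0, 0, 0]]
half_hidden_vehicle = [[0, 0, 0, 0], [0, 0, 0, 0], [1, 1, 0, 0], [1, 1, 0, 0]]
print(classify(centroids, half_hidden_person))
print(classify(centroids, half_hidden_vehicle))
```

Because the training set already contains mirrored and partly hidden views, the averaged signatures stay close to obstructed examples the classifier has not seen, which is the effect the varied training images are meant to achieve.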

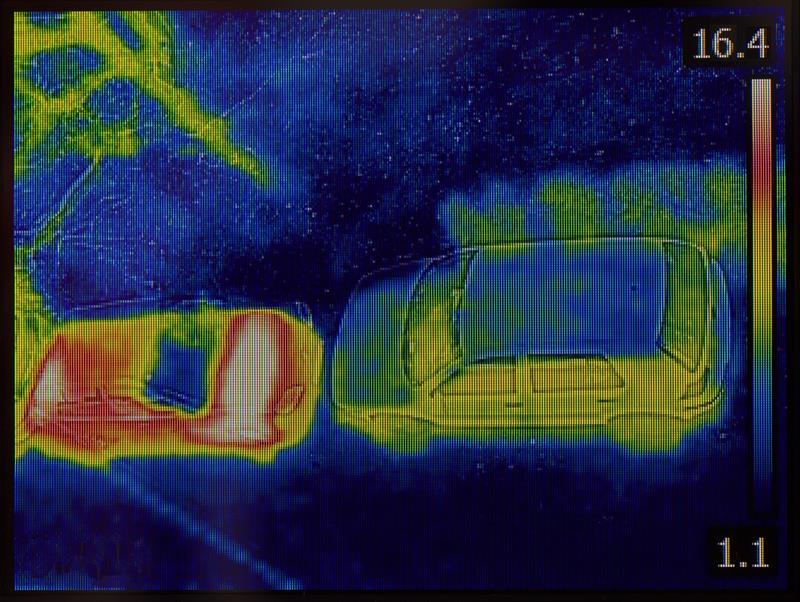

Still in its development stages, the system can already detect and classify humans and six different types of vehicle: saloon cars, pick-up trucks, 4x4s, vans, estate cars and people carriers.

The technology could find extensive application in a variety of industries, with the security, surveillance, engineering and construction sectors among those that could benefit most.


“The system we’ve created is a unique piece of technology,” claims Dr Pablo Casaseca, senior lecturer in Signal and Image Processing at UWS. “It significantly enhances detection and classification capabilities on thermal imaging cameras at a long-range.”

If the system were to be presented with enough high-quality data, it could detect a host of objects with a very small number of pixels. The more training data presented to the machine over time, the better the system responds – even to objects or compositions it hasn’t encountered before.

“In security and surveillance fields, for example, the system could help staff monitor video feeds or footage,” says Dr Casaseca. “Observing security cameras over long periods of time can lower concentration levels. Our system could assist by pointing out objects of interest in the distance and suggest what they might be before they approach,” he says. “In construction or infrastructure, if a camera embedded with the technology was placed on a UAV or drone, it could identify small cracks on larger structures, such as a bridge, by detecting the defect and focusing on it.”

CENSIS brokered the relationship between UWS and Thales after conducting a technical landscape survey, identifying an academic partner for the company. The Innovation Centre, which recently announced its 50th project, aims to bring together Scotland’s academic institutions and industrial base.


Dr Matt Kitchin, algorithms engineer at Thales in Glasgow, says: “The outcome of phase one has been encouraging – the classification results have yielded very high success rates over a wide range of imagery.”

Due to the findings and the potential opportunities the project has unveiled for both Thales and UWS, a second research and development phase has been launched. For this, Thales in Glasgow has appointed two algorithm engineers to work on the implementation of these machine learning algorithms and to help fine-tune and better understand how the collaboration can apply the technology in a range of industries.


CENSIS also provided project management support to Thales and UWS for the duration of phase one, and the three organisations have agreed to jointly fund a PhD at UWS as the project launches into phase two.

Gavin Burrows, project manager at CENSIS, says: “This project is a great example of what can be achieved when the right academic institution is paired with industry specialists. Thanks to their partnership, Thales and UWS have created a technology that could revolutionise detection and classification capabilities in a range of industries, placing them at the forefront of research and industrial applications in this area. We look forward to seeing the results of the next research and development stage.”

First on-road tests for self-driving Jaguar Land Rovers

Another industry that could benefit from the kind of technology being developed by CENSIS, Thales and UWS is automotive, especially autonomous vehicles. Jaguar Land Rover is the latest manufacturer undertaking its first UK road tests for autonomous and connected vehicles.

UK Autodrive is the largest of three consortia launched to support the introduction of self-driving vehicles into the UK. It is helping to establish the UK as a global hub for research, development and integration of automated and connected vehicles into society. The consortium has already proven these research technologies in a closed track environment and the start of real-world testing is the next step to turning the research into reality.

Nick Rogers, executive director – product engineering at Jaguar Land Rover, says: “Testing this self-driving project on public roads is so exciting, as the complexity of the environment allows us to find robust ways to increase road safety in the future. By using inputs from multiple sensors, and finding intelligent ways to process this data, we are gaining accurate technical insight to pioneer the automotive application of these technologies.”

With the launch of the trials, Coventry joins just 12 other cities in the world in conducting tests on public roads. The trials will continue through 2018.

As part of the £20 million UK Autodrive project, Jaguar Land Rover is testing a range of research technologies on the roads of Coventry that will allow cars to communicate with each other as well as roadside infrastructure, such as traffic lights. The trials will also explore how future connected and autonomous vehicles can replicate human behaviour and reactions when driving.
